An AGV that passes its factory acceptance test but fails in your warehouse is the most expensive kind of quality problem. Unlike static equipment, automated guided vehicles carry moving loads through dynamic environments — a navigation sensor miscalibrated by two degrees, an obstacle avoidance zone that's two centimeters too narrow, or a battery management system that logs false capacity will all show up as real operational failures after deployment. Catching these issues at the Chinese factory — before the unit ships — costs a fraction of a field retrofit or a liability claim from a workplace incident. This guide covers what a structured third-party pre-shipment inspection of AGV systems looks like, and what specific test criteria separate a unit that will perform reliably in the field from one that merely looks finished.

Key Takeaways

- Navigation accuracy must be measured with a physical tape or laser tracker — not accepted from the factory's own test log.

- Obstacle avoidance zones should be validated in three axis planes; a sensor that passes the frontal test may still fail on lateral or low-obstacle scenarios.

- Battery autonomy claims are only verifiable under rated load; idle-state discharge tests consistently overstate real-world runtime by 15–40%.

- BMS fault simulation — not just monitoring — is the test that reveals whether the battery management system will actually protect the unit or silently allow a fault condition.

Why AGV Inspection Is Different From Standard Machinery QC

Mobile Systems Fail Differently Than Fixed Equipment

Standard manufacturing equipment is tested at a fixed station: a press cycles, a conveyor moves product from A to B, a CNC spindle cuts a test piece. Failure modes are relatively contained. AGVs operate in open environments where the interaction between the vehicle's sensors, its navigation system, its load, and the surrounding infrastructure creates a much wider failure surface. A path-planning algorithm that works perfectly in the factory's test corridor may produce positioning errors the moment the floor surface changes from polished concrete to epoxy coating, a scenario the inspector needs to replicate.

ASTM Committee F45 on Driverless Automatic Guided Industrial Vehicles publishes test methods and practices for AGV performance testing, including ASTM F3218, a practice for documenting the environmental conditions (floor surface, lighting, and similar factors) under which a test is run. Safety requirements such as obstacle detection behavior, markings, and physical protection are set out in ISO 3691-4:2020 and ANSI/ITSDF B56.5. Any quality inspection should reference these documents. When sourcing AGVs from Chinese manufacturers, inspectors should request evidence that the factory's internal testing follows these benchmarks, and then independently verify the key parameters rather than accepting documentation alone.

The Three Systems That Determine Field Reliability

Across the AGV categories most commonly sourced from Chinese manufacturers — counterbalanced forklifts, tow vehicles, unit-load carriers, and narrow-aisle stackers — field reliability consistently traces back to three interdependent systems: navigation and positioning, obstacle avoidance and safety, and battery and power management. A failure in any one of these three will ground a unit. An inspection that checks only mechanical build quality while skipping these electronic and algorithmic systems is not an AGV inspection — it is a chassis inspection.

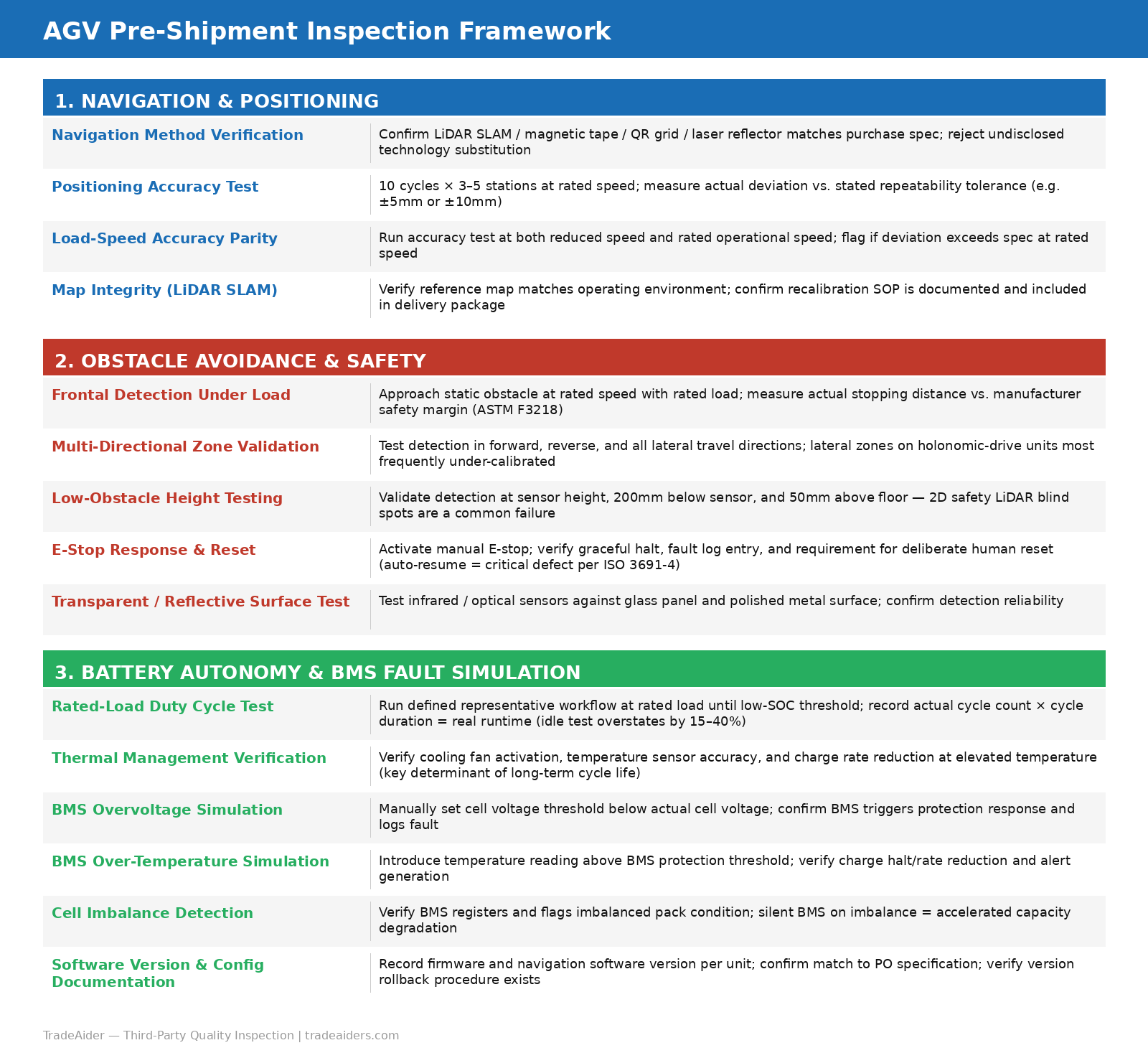

AGV Pre-Shipment Inspection Framework: three core system areas and their key test criteria

Navigation and Positioning Accuracy Tests

Understanding the Navigation Method First

The inspection protocol depends entirely on which navigation technology the unit uses. The four methods most common in Chinese-manufactured AGVs each have different failure modes and different test procedures:

- LiDAR SLAM (Simultaneous Localization and Mapping): the unit builds a map of its environment using laser point clouds. Most accurate and flexible, but vulnerable to map corruption and reflective surface interference. Test: verify map-building accuracy in the actual operating environment, not just the factory floor.

- Magnetic tape guidance: the AGV follows embedded magnetic strips. Simple and low-cost, but highly sensitive to tape damage, surface contamination, and installation tolerances. Test: verify sensor sensitivity at rated gap height; verify behavior at tape joins and intersections.

- QR code / barcode grid navigation: the unit reads floor-mounted codes to determine position. Common in AMR-style systems. Test: verify read accuracy at rated speed; test behavior when a code is partially obscured.

- Laser reflector guidance: fixed reflectors triangulate position. High accuracy in defined environments. Test: verify reflector placement tolerances and recalibration procedure documentation.

Before beginning the navigation test, the inspector should confirm which navigation method the unit uses and cross-check it against the purchase specification. Substitution of navigation technology — particularly downgrading from LiDAR SLAM to magnetic tape in cost-reduction exercises — is a known supplier deviation that changes the operational profile of the unit entirely.

Positioning Accuracy Measurement Protocol

Navigation accuracy is typically specified as a repeatability tolerance — for example, ±10mm or ±5mm — at designated stopping positions. Verifying this claim requires a physical measurement, not a review of the factory's test printout. The inspector marks three to five reference positions along the unit's test route and measures actual stopping position across ten consecutive cycles at each station. The deviation between the most extreme positions across all cycles defines the repeatability error. For AGVs interfacing with fixed infrastructure (conveyors, charging stations, rack systems), the relevant tolerance is the tightest interface clearance in the customer's facility — if that clearance is 20mm, a unit with ±15mm repeatability is operationally borderline even if it passes the manufacturer's ±10mm spec on a good test run.
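The arithmetic behind this protocol is small enough to script on site. The following is a minimal sketch, assuming the inspector records each stop as a signed offset in millimeters from the marked reference along one axis. The function name, the data layout, and the sample readings are hypothetical and only illustrate the calculation.

```python
from typing import Dict, List


def repeatability_report(stops_mm: Dict[str, List[float]],
                         tolerance_mm: float) -> Dict[str, dict]:
    """Summarise stopping offsets per station.

    stops_mm maps a station label to signed offsets (mm) from the marked
    reference, one value per cycle. tolerance_mm is the +/- figure under test.
    """
    report = {}
    for station, offsets in stops_mm.items():
        spread = max(offsets) - min(offsets)      # distance between the two extreme stops
        worst = max(abs(o) for o in offsets)      # largest offset from the reference mark
        report[station] = {
            "cycles": len(offsets),
            "spread_mm": spread,
            "worst_offset_mm": worst,
            "within_tolerance": worst <= tolerance_mm,
        }
    return report


# Illustrative readings only, not real inspection data.
readings = {
    "station_A": [2.0, -3.0, 1.5, 4.0, -1.0, 0.5, 3.0, -2.5, 1.0, 2.0],
    "station_B": [6.0, 9.0, -4.0, 12.0, 7.5, -2.0, 8.0, 10.5, 5.0, 3.0],
}
result = repeatability_report(readings, tolerance_mm=10.0)
for station, row in result.items():
    print(station, row)
```

The sketch reports both the extreme-to-extreme spread and the worst single offset, because a ± tolerance describes a band twice as wide as its stated figure. Which of the two numbers is compared against the specification should be agreed with the supplier before the test.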

Speed also matters. Many units achieve their stated accuracy at reduced speed but exhibit larger errors at rated operational speed. The test should be run at the speed the AGV will operate at in real use. Pre-shipment inspection conducted at the factory, before the unit leaves China, is the only practical moment to run multi-cycle navigation tests under controlled conditions — a field retrofit of a navigation system that fails post-delivery will cost far more in downtime and engineering time than the inspection itself.

Map Integrity and Recalibration Documentation

For LiDAR SLAM units, the inspection should also verify map integrity — that the reference map stored on the unit matches the environment it was built in, and that the recalibration process (what happens when a map drifts or a unit is relocated) is documented with a clear step-by-step procedure. Units that arrive without a recalibration SOP will require the customer to reverse-engineer the process after deployment, which creates avoidable downtime risk.

Obstacle Avoidance and Safety Zone Validation

Sensor Types and Their Blind Spots

AGV obstacle avoidance systems use three main sensor types, each with known gaps that the inspection must probe:

- Ultrasonic (sonic) sensors: reliable for large, flat obstacles but can miss thin objects, wire mesh, or narrow vertical structures. Test: approach a 50mm diameter vertical pole at rated speed — many ultrasonic arrays will fail to detect it.

- Infrared / optical sensors: excellent for close-range detection but vulnerable to ambient light interference and transparent or highly reflective surfaces. Test: detection reliability in high-ambient-light conditions and against a glass panel.

- LiDAR safety scanners: the most comprehensive option, but dependent on scan plane coverage. A 2D safety LiDAR mounted at 300mm height will not detect an obstacle at 100mm height (a pallet corner, for example). Test: detection at three height planes — at sensor height, 200mm below sensor, and 50mm above floor.

Detection zones should be validated at the stopping distance required to halt the vehicle under rated load, with the test conditions (floor surface, lighting) recorded alongside the result, as ASTM F3218 calls for. The practical test: load the AGV to its rated capacity, approach a static obstacle at rated speed, and measure the actual stopping distance. The vehicle must stop before the obstacle with the manufacturer's stated safety margin. This test is frequently omitted from factory acceptance tests because it requires a loaded test run, which some factories find logistically inconvenient, but it is the test that matters most for workplace safety.
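The loaded stopping test can be logged and evaluated with a few lines of code. This is a sketch under stated assumptions: the inspector measures the clearance left between vehicle and obstacle once the AGV is at standstill, and the `StopRun` record, the field names, and the sample values are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class StopRun:
    load_kg: float             # payload carried during the run
    speed_m_s: float           # approach speed
    gap_after_stop_mm: float   # clearance to the obstacle at standstill (0 or less = contact)


def evaluate_stop_runs(runs: List[StopRun], rated_load_kg: float,
                       rated_speed_m_s: float,
                       required_margin_mm: float) -> Tuple[List[StopRun], List[StopRun]]:
    """Return (valid_runs, failures). Only runs at rated load and speed count."""
    valid, failures = [], []
    for run in runs:
        at_rated = run.load_kg >= rated_load_kg and run.speed_m_s >= rated_speed_m_s
        if not at_rated:
            continue  # under-loaded or slow runs say nothing about the rated case
        valid.append(run)
        if run.gap_after_stop_mm < required_margin_mm:
            failures.append(run)
    return valid, failures


# Illustrative values only.
runs = [
    StopRun(load_kg=1000, speed_m_s=1.5, gap_after_stop_mm=180),
    StopRun(load_kg=1000, speed_m_s=1.5, gap_after_stop_mm=90),
    StopRun(load_kg=0, speed_m_s=1.5, gap_after_stop_mm=400),   # unloaded, ignored
]
valid, failures = evaluate_stop_runs(runs, rated_load_kg=1000,
                                     rated_speed_m_s=1.5, required_margin_mm=100)
print(len(valid), "valid runs,", len(failures), "below the stated margin")
```

Discarding unloaded runs is deliberate: a generous clearance recorded with an empty vehicle should not be allowed to average out a marginal result at rated load.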

Multi-Directional Zone Testing

Frontal obstacle avoidance is the minimum. A complete inspection validates safety zones in all directions the AGV can travel: forward, reverse, and any lateral movements for units with holonomic drive systems. Side-travel safety zones on multi-directional AGVs are consistently the weakest point found during inspection — manufacturers calibrate the frontal zone carefully and treat lateral zones as secondary, resulting in detection gaps that only appear during specific maneuvers in actual operation.
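One way to make sure no direction or height plane is skipped is to generate the test matrix before the inspection starts. The sketch below assumes the three height planes described for LiDAR safety scanners (sensor height, 200mm below the sensor, 50mm above the floor) and the travel directions named above. The function name and the record layout are hypothetical.

```python
from itertools import product
from typing import Dict, List


def build_zone_test_matrix(sensor_height_mm: int, holonomic: bool) -> List[Dict]:
    """List every direction/height combination the inspector should run."""
    directions = ["forward", "reverse"]
    if holonomic:
        directions += ["lateral_left", "lateral_right"]

    # Sensor plane, 200 mm below the sensor, and 50 mm above the floor.
    candidate_heights = [sensor_height_mm, sensor_height_mm - 200, 50]
    # Drop planes at or below floor level and merge duplicates.
    heights = sorted({h for h in candidate_heights if h > 0}, reverse=True)

    return [
        {"direction": d, "obstacle_height_mm": h, "detected": None}  # None = not yet run
        for d, h in product(directions, heights)
    ]


matrix = build_zone_test_matrix(sensor_height_mm=300, holonomic=True)
for case in matrix:
    print(case)
```

For a holonomic unit with the scanner at 300mm this produces twelve cases. The `detected` field starts as `None` so that an unrun case cannot be mistaken for a pass in the final report.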

The inspector should also verify emergency stop (E-stop) function: manual activation of the E-stop, response time measurement, and confirmation that the unit requires a deliberate human reset rather than resuming automatically. Auto-resume after E-stop activation is a safety non-conformance under ISO 3691-4:2020, the international safety standard for driverless industrial trucks, and should be flagged as a critical defect requiring correction before shipment. A during-production inspection at the factory is the right stage to verify that the safety software configuration matches the customer's facility requirements, rather than after the unit arrives on-site.

Battery Autonomy and BMS Fault Simulation

Why Idle-State Discharge Tests Are Misleading

A common gap in factory acceptance testing is that battery autonomy is measured by discharging the battery from a stationary vehicle — no motors running, no load, no movement. This method consistently overstates real-world runtime. Under rated load at operational speed, the actual power draw from the drive system, the navigation computer, the safety sensors, and the communication modules is typically 2–4x higher than idle draw. The result is that a unit documented as having "8-hour runtime" in the factory test may deliver 5–6 hours in real warehouse operation.

A proper battery autonomy test requires running the AGV through a defined duty cycle — a representative loop of the actual workflow the vehicle will perform in the customer's facility — at rated load, for a measured number of cycles, until the battery reaches the manufacturer's defined low-state-of-charge threshold. The total cycle count multiplied by cycle duration gives the actual operational runtime. This test takes time — typically 4–8 hours of continuous operation — but it is the only method that produces a defensible runtime figure. For buyers ordering AGVs for 24/7 warehouse operations, runtime shortfalls discovered after deployment translate directly into throughput gaps and unplanned shift extensions.
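The runtime calculation described above is cycle count multiplied by cycle duration, compared against the documented claim. A minimal sketch follows, with hypothetical function names and illustrative numbers. The shortfall is expressed here as a percentage of the claimed figure, which is one possible reading of how an overstated runtime is quantified.

```python
def measured_runtime_hours(cycles_completed: int, cycle_duration_min: float) -> float:
    """Operational runtime from a duty-cycle test: cycles x minutes per cycle."""
    return cycles_completed * cycle_duration_min / 60.0


def runtime_shortfall_pct(claimed_hours: float, measured_hours: float) -> float:
    """Shortfall relative to the claimed runtime, in percent (negative = exceeds claim)."""
    return (claimed_hours - measured_hours) / claimed_hours * 100.0


# Illustrative test: 66 loaded cycles of 5 minutes each before the low-charge threshold.
measured = measured_runtime_hours(cycles_completed=66, cycle_duration_min=5.0)
shortfall = runtime_shortfall_pct(claimed_hours=8.0, measured_hours=measured)
print(f"measured {measured:.2f} h, shortfall {shortfall:.2f}% of claim")
```

Recording the cycle count and the cycle duration separately, rather than only the final hours figure, lets the buyer re-derive runtime later if the real workflow turns out to have a longer or shorter loop.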

Industrial deployments of AGV fleets in heavy-use environments have demonstrated that lithium-ion batteries with proper thermal management can sustain more than 3,500 charge cycles while retaining above 80% of rated capacity. The inspection should verify that the battery management system's thermal controls (cooling fan operation, temperature sensor readings, charge rate reduction at elevated temperature) are functioning correctly, since thermal management is the primary determinant of long-term cycle life.

BMS Fault Simulation: The Test Most Factories Skip

Battery management system quality determines whether a fault condition is caught and handled safely, or allowed to propagate. The inspection should verify BMS fault response through active simulation, not just by reviewing the monitoring dashboard. Three minimum fault simulation tests:

- Overvoltage trigger: manually set a cell voltage threshold below the actual cell voltage to confirm the BMS will trigger a protection response. The AGV should halt gracefully and log the fault.

- Over-temperature trigger: introduce a temperature sensor reading above the BMS protection threshold (typically 45–55°C depending on cell chemistry). The BMS should reduce charge rate or halt charging and trigger an alert.

- Cell imbalance detection: verify the BMS registers and flags an imbalanced pack — a condition that accelerates degradation but is often not surfaced until significant capacity loss has already occurred.

A BMS that passes all three of these simulations has demonstrated it will respond to real fault conditions. A BMS that only has monitoring capability — that shows data but does not trigger protective responses — should be documented as a non-conformance against the purchase specification if active protection was specified. For buyers sourcing AGV fleets from Chinese manufacturers, factory audit before production placement is the stage to verify whether the supplier has BMS testing equipment and a defined test procedure — a factory without BMS simulation capability has almost certainly not been testing this during production.
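The distinction between a BMS that protects and one that only monitors can be captured in the inspection record itself. The sketch below is an assumption about how such a record might be structured: the `FaultSimResult` type, the fault labels, and the classification names are hypothetical, and the rule that cell imbalance needs only a flag (not a protective action) follows the three tests listed above.

```python
from dataclasses import dataclass
from typing import Iterable

# The three minimum simulations. True means a protective action is expected,
# not merely a flag on the dashboard.
REQUIRED = {"overvoltage": True, "over_temperature": True, "cell_imbalance": False}


@dataclass
class FaultSimResult:
    fault: str      # one of the keys in REQUIRED
    flagged: bool   # BMS registered and logged the simulated fault
    acted: bool     # BMS took a protective action (halt, reduce charge rate, alert)


def classify_bms(results: Iterable[FaultSimResult]) -> str:
    """Classify a unit from its fault simulation results."""
    seen = {r.fault: r for r in results}
    if any(fault not in seen for fault in REQUIRED):
        return "incomplete"            # at least one simulation was not run
    if any(not seen[fault].flagged for fault in REQUIRED):
        return "fault_not_detected"    # BMS did not even register a fault
    if any(needs_action and not seen[fault].acted
           for fault, needs_action in REQUIRED.items()):
        return "monitoring_only"       # data shown, no protective response
    return "active_protection"


# Illustrative results only.
unit_a = [
    FaultSimResult("overvoltage", flagged=True, acted=True),
    FaultSimResult("over_temperature", flagged=True, acted=True),
    FaultSimResult("cell_imbalance", flagged=True, acted=False),
]
unit_b = [
    FaultSimResult("overvoltage", flagged=True, acted=False),
    FaultSimResult("over_temperature", flagged=True, acted=True),
    FaultSimResult("cell_imbalance", flagged=True, acted=False),
]
print(classify_bms(unit_a), classify_bms(unit_b))
```

A `monitoring_only` result maps directly to the non-conformance described above when the purchase specification called for active protection.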

Build Quality and Mechanical Checks

Structural and Wiring Standards

Beyond the three core systems, the inspection should cover structural and build quality criteria that affect long-term reliability:

| Inspection Area | What to Check | Pass Criterion |
|---|---|---|
| Wiring harness routing | Cable routing through chassis, clamp spacing, bend radius at connectors | No cables within 50mm of moving parts; bend radius ≥ 5× cable diameter |
| Connector seating | All high-current connectors (motor, battery) fully seated and locked | Pull test: no movement at rated connector extraction force |
| Drive wheel condition | Tread depth, hardness, flatness across wheel surface | No flat spots; hardness within ±5 Shore A of specification |
| Fastener torque | Structural bolts on chassis, mast, and drive unit mounting | Torque wrench verification at ≥10 structural joints per unit |
| IP rating of electronics enclosures | Gasket condition, enclosure seal continuity, drain holes if applicable | Visual and tactile check; request IP test certificate from factory QA |
| Communication system | Wi-Fi / fleet management system connectivity; signal strength at simulated range | Stable connection at 50m line-of-sight; no packet loss above 0.1% |
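The numeric pass criteria in the table can be written as simple checks so that every unit is judged against the same thresholds. This is a sketch only: the function names and sample measurements are hypothetical, and the handling of boundary values (for example a cable exactly 50mm from a moving part counted as a pass) is an assumption that should be agreed with the buyer.

```python
def cable_clearance_ok(distance_to_moving_part_mm: float) -> bool:
    """No cables within 50 mm of moving parts (exactly 50 mm treated as a pass)."""
    return distance_to_moving_part_mm >= 50.0


def bend_radius_ok(bend_radius_mm: float, cable_diameter_mm: float) -> bool:
    """Bend radius must be at least 5x the cable diameter."""
    return bend_radius_mm >= 5.0 * cable_diameter_mm


def wheel_hardness_ok(measured_shore_a: float, specified_shore_a: float) -> bool:
    """Hardness must be within +/-5 Shore A of the specification."""
    return abs(measured_shore_a - specified_shore_a) <= 5.0


def packet_loss_ok(packets_sent: int, packets_received: int) -> bool:
    """Packet loss must not exceed 0.1% of packets sent."""
    loss_pct = (packets_sent - packets_received) / packets_sent * 100.0
    return loss_pct <= 0.1


# Illustrative measurements only.
checks = {
    "cable_clearance": cable_clearance_ok(62.0),
    "bend_radius": bend_radius_ok(bend_radius_mm=35.0, cable_diameter_mm=8.0),
    "wheel_hardness": wheel_hardness_ok(measured_shore_a=88.0, specified_shore_a=92.0),
    "packet_loss": packet_loss_ok(packets_sent=10000, packets_received=9992),
}
print(checks)
```

In this example the bend radius check fails, because a cable of 8mm diameter needs a radius of at least 40mm.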

Software Version and Configuration Documentation

AGVs ship with firmware and navigation software that directly determines their behavior. The inspection should record the exact software version installed on each unit, verify it matches the version specified in the purchase order, and confirm that a rollback procedure exists if the customer's site management system requires a different version. Software version mismatches between units in the same fleet create integration complexity that can delay deployment by weeks. Requiring version documentation during inspection, rather than discovering the mismatch after delivery, is a straightforward process control that many buyers overlook.
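Version consistency across a fleet is easy to verify once the installed versions have been recorded per unit. A minimal sketch, assuming the inspector notes one version string per unit serial. The function name, the unit identifiers, and the version numbers are hypothetical.

```python
from typing import Dict


def find_version_mismatches(installed: Dict[str, str], specified: str) -> Dict[str, str]:
    """Return the units whose installed version differs from the purchase order."""
    return {
        unit: version
        for unit, version in sorted(installed.items())
        if version.strip() != specified.strip()   # ignore stray whitespace in the log
    }


# Illustrative fleet record only.
fleet = {"AGV-01": "2.4.1", "AGV-02": "2.4.1", "AGV-03": "2.3.9"}
mismatches = find_version_mismatches(fleet, specified="2.4.1")
print(mismatches or "all units match the purchase order")
```

The comparison is an exact string match on purpose: deciding whether a newer or older build is acceptable is a contractual question for the buyer, not something the inspection script should infer.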

The TradeAider inspection standard provides reference criteria for electronic and mechanical verification across industrial equipment categories. For AGV orders involving multiple units, the inspection scope should include a sample from each production batch — batch consistency matters as much as individual unit performance, because a fleet of 10 AGVs with three different navigation calibration states will produce routing inconsistencies that are difficult to diagnose after deployment.

How to Structure AGV Inspection for Your Order Size

Sampling vs. Full Inspection

For small orders (1–5 units), full inspection of every unit is both practical and justified — the cost per unit is low relative to the total order value and the risk of a single non-conforming unit disrupting the deployment. For larger orders (10+ units), AQL-based sampling inspection is the standard approach. The AQL calculator allows buyers to determine the appropriate sample size for their order quantity and acceptable quality level. For high-value, safety-critical equipment like AGVs, an AQL of 1.0 or 0.65 is appropriate — accepting a higher defect rate to reduce inspection cost is a poor trade-off when the consequences of a navigation or safety failure are significant.

Phased Inspection for Complex Orders

For orders where AGVs are produced in batches across multiple weeks, a during-production inspection of the first batch, carried out before the full order is completed, lets buyers catch systemic quality issues while there is still time to correct them. A navigation calibration error discovered in week one of a four-week production run can be corrected in the remaining three weeks; the same error discovered during pre-shipment inspection of the complete order requires either accepting the defect or holding the entire shipment for rework. The cost difference is substantial.

For new supplier relationships, a pre-production inspection that verifies the factory's test equipment, calibration records, and BMS simulation capability before production begins is the most cost-effective quality intervention available. A factory that cannot demonstrate functional BMS fault simulation equipment before production is unlikely to discover BMS defects during production — and those defects will arrive in your warehouse.

Frequently Asked Questions

What is the most commonly failed test during AGV pre-shipment inspection?

Battery autonomy under rated load is the most common failure point in our experience. Factories typically test battery runtime in a stationary, no-load condition, which consistently overstates real-world runtime by 15–40%. When an inspector runs the AGV through a representative duty cycle at rated load, actual runtime frequently falls short of the specification. The second most common failure is obstacle detection at non-frontal angles — lateral detection zones on multi-directional units are frequently under-calibrated relative to the frontal zone.

How do I verify navigation accuracy claims from the factory?

Request the factory's positioning accuracy test data first, because it tells you what claim they're making and what test protocol they used. Then independently verify it: mark three to five stopping positions, run ten consecutive cycles at each, and measure actual deviation with a tape measure or laser distance tool. The independent measurement should fall within the tolerance the factory claims. If the factory claims ±5mm but your independent test shows ±18mm, the specification has not been met and the issue needs to be resolved before shipment.

Does AGV inspection need to cover software, or just the hardware?

Software configuration is a critical part of an AGV inspection. The navigation map, obstacle avoidance zone parameters, safety response thresholds, and BMS protection settings are all software-configured — and all of them can be set incorrectly without any visible sign on the hardware. An AGV that passes a hardware inspection but has incorrectly configured safety zones is a liability. Software version, configuration documentation, and functional testing of safety responses should be standard elements of any AGV inspection.

What standards govern AGV safety testing?

The primary international standards are ISO 3691-4:2020 (industrial trucks: driverless trucks and their systems) and the ASTM F45 series (driverless automatic guided industrial vehicles), which includes ASTM F3218 for documenting the environmental conditions of a test. For AGVs operating in North American facilities, buyers should also verify compliance with ANSI/ITSDF B56.5, which covers safety requirements for automated guided industrial vehicles. Chinese manufacturers exporting to the US and EU markets should be able to provide conformity documentation against at least one of these standards; lack of any standards documentation is a supplier risk indicator.

How should I handle an AGV fleet order from a first-time Chinese supplier?

For a first-time supplier, the recommended approach is a three-stage inspection sequence: a factory audit before production placement (to verify the supplier has the test equipment and processes needed to build to your specification), a during-production inspection of the first batch (to catch systemic issues while production is ongoing), and a pre-shipment inspection of the complete order (to verify final units against your acceptance criteria). This sequence costs more upfront than a single pre-shipment inspection, but it dramatically reduces the risk of receiving a full fleet of non-conforming units, the scenario where inspection cost savings become irrelevant compared to rework, delay, and deployment disruption costs.

A systematic pre-shipment inspection is the only reliable way to verify that the AGVs leaving your Chinese supplier's factory will perform as specified in your facility. TradeAider's inspection service covers navigation accuracy measurement, obstacle avoidance zone validation, and battery autonomy testing under load, with a real-time report accessible during the inspection and an official PDF report delivered within 24 hours.
