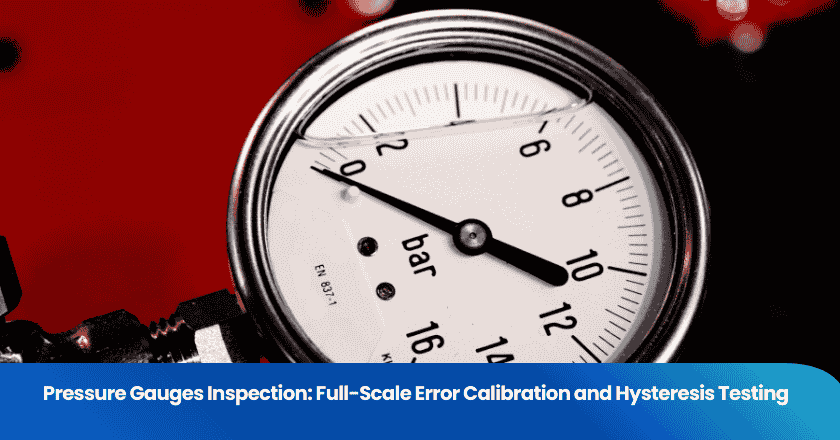

Pressure gauges are one of the most common instruments in industrial manufacturing, process plants, and hydraulic systems — and one of the most commonly accepted without adequate incoming inspection. A gauge that reads 5% high at full scale does not look wrong on the shelf. It looks like a gauge. It is only when a process runs at the wrong pressure, a safety relief valve fails to activate at the correct set point, or a hydraulic system produces unexpected forces that the gauge's error becomes consequential. By that point, the cost of the error is typically orders of magnitude larger than the cost of the inspection that would have caught it.

This guide covers the two most important accuracy tests for pressure gauge incoming inspection: full-scale error (span error) calibration and hysteresis testing. Together, these two tests characterize the accuracy of a gauge across its measurement range and reveal mechanical problems that a single-point check cannot find.

Key Takeaways

- Full-scale error (span error) calibration verifies that a gauge's reading is accurate across its full range, not just at a single reference point — it is the primary accuracy verification test.

- Hysteresis testing measures the difference between a gauge's reading when pressure is increasing versus decreasing — hysteresis reveals mechanical problems in the Bourdon tube or movement that span calibration cannot detect.

- The applicable accuracy class standard (ASME B40.100 in North America, EN 837-1 in Europe) defines the maximum permissible errors and the specific test protocol — always test to the class your application requires.

Pressure Gauge Accuracy Classes and What They Mean

Accuracy Class Definitions

Pressure gauge accuracy class defines the maximum permissible error (MPE) as a percentage of the gauge's full-scale range (also called span). A Class 1.0 gauge has an MPE of ±1.0% of full scale. A Class 0.5 gauge has an MPE of ±0.5% of full scale. For a gauge with a 0-100 bar range, a Class 1.0 designation means the gauge must read within ±1.0 bar of the true pressure at any point in its range.
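As a quick illustration of the arithmetic above, the following sketch converts an accuracy class into an error band in pressure units. The function name `mpe_in_units` and its parameters are hypothetical, chosen for this example only.

```python
def mpe_in_units(accuracy_class, range_min, range_max):
    """Return the maximum permissible error (MPE) in the gauge's pressure units.

    accuracy_class is the class number read as a percentage of span,
    for example 1.0 for a Class 1.0 gauge.
    """
    span = range_max - range_min
    return accuracy_class * span / 100.0


# The 0-100 bar, Class 1.0 example from the text: the band is +/-1.0 bar.
print(mpe_in_units(1.0, 0.0, 100.0))  # 1.0
```

Because the band is a fixed fraction of span, the same ±1.0 bar applies at 10 bar and at 90 bar, so the error relative to the reading is largest at the low end of the range.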

The two dominant standards for pressure gauge accuracy classes are ASME B40.100 (used in North America) and EN 837-1 (used in Europe and much of the rest of the world). ASME B40.100 defines accuracy grades of 4A, 3A, 2A, 1A, A, B, C, and D, with 4A being the most accurate (±0.1% of span) and D the least accurate (±5%). EN 837-1 uses class designations of 0.1, 0.25, 0.6, 1, 1.6, 2.5, and 4, representing the MPE as a percentage of full scale. (ASME B40.100 — Pressure Gauges and Gauge Attachments)

Choosing the Right Accuracy Class for Your Application

The accuracy class required depends on the consequence of pressure measurement error in the specific application. Industrial process applications where pressure is used to monitor a process (not to control a safety system) typically use Class 1.0 or Class 1.6 gauges. Applications where pressure measurement directly affects safety system setpoints — pressure relief valve back pressure, hydraulic safety circuit pressure, vessel maximum allowable working pressure (MAWP) monitoring — require Class 0.5 or better. Laboratory and precision test applications use Class 0.1 to 0.25 gauges.

A common procurement error is buying gauges based on process pressure range without specifying the accuracy class. A "0-250 bar" marking on a gauge from a Chinese manufacturer tells you the range. It tells you nothing about accuracy unless the class is explicitly specified in the purchase order and verified at inspection.

Test 1: Full-Scale Error (Span Error) Calibration

What Full-Scale Error Calibration Measures

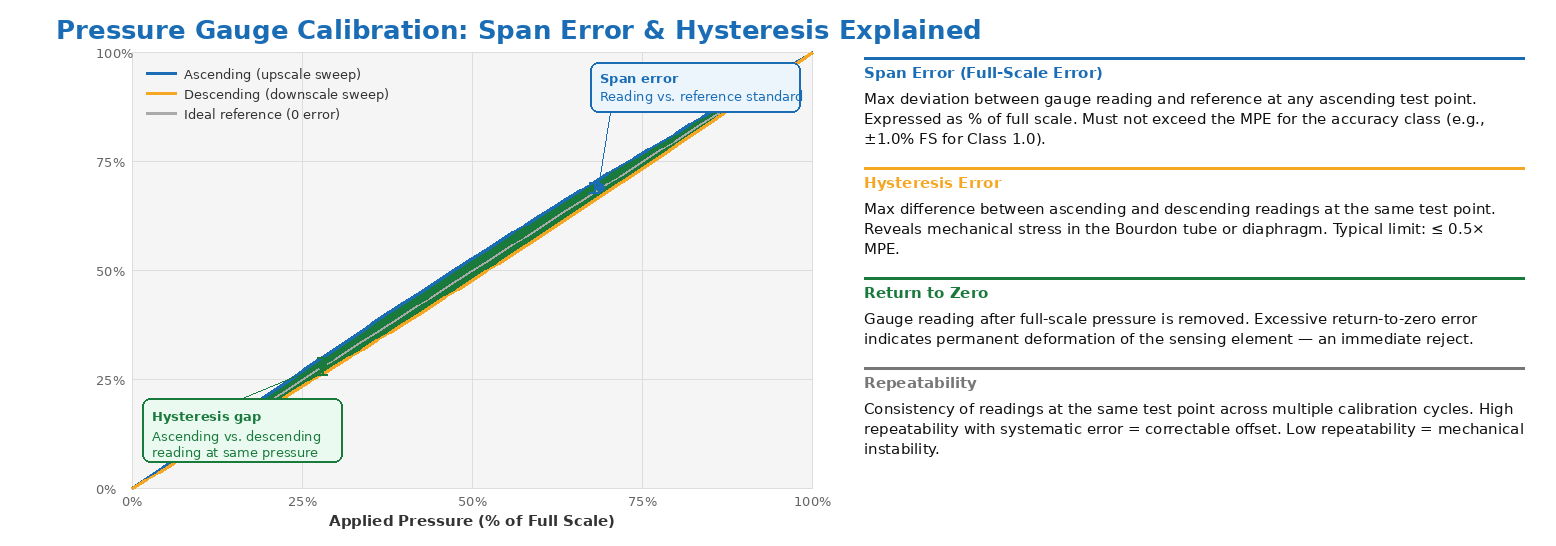

Full-scale error calibration compares the gauge's indication against a reference standard pressure source across the full measurement range of the gauge. The test applies a series of known pressures — typically 5 to 11 evenly spaced points from zero to full scale, including zero — and records the gauge reading at each point. The error at each point is calculated as the difference between the gauge reading and the reference pressure, expressed as a percentage of full scale.

The test is conducted in both the upscale direction (increasing pressure from zero to full scale) and the downscale direction (decreasing pressure from full scale to zero), but the two data sets serve different purposes. Span error (full-scale error) is evaluated using the upscale readings only, or the average of the upscale and downscale readings, depending on the applicable standard. The difference between the upscale and downscale readings is evaluated separately as hysteresis.
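The per-point error calculation described in this section can be sketched as follows. The function names and the sample readings are invented for illustration, and whether to pass downscale readings for averaging is an assumption that depends on the standard being applied.

```python
def span_errors_pct_fs(reference, upscale, full_scale, downscale=None):
    """Error at each test point, as a percentage of full scale.

    If downscale readings are given (ordered to match the reference
    points), each point uses the average of the two directions;
    otherwise the upscale readings alone are used.
    """
    if downscale is None:
        indicated = list(upscale)
    else:
        indicated = [(u + d) / 2.0 for u, d in zip(upscale, downscale)]
    return [(ind - ref) / full_scale * 100.0
            for ind, ref in zip(indicated, reference)]


def worst_span_error(errors):
    """Return the signed error with the largest magnitude."""
    return max(errors, key=abs)


# Hypothetical 0-100 bar gauge checked at five points.
reference = [0.0, 25.0, 50.0, 75.0, 100.0]
upscale = [0.0, 25.5, 50.5, 75.0, 99.5]
errors = span_errors_pct_fs(reference, upscale, full_scale=100.0)
print([round(e, 2) for e in errors])  # [0.0, 0.5, 0.5, 0.0, -0.5]
```

The worst-case magnitude, here 0.5% of full scale, is the figure compared against the MPE for the accuracy class.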

Reference Standard Requirements

The accuracy of a calibration is limited by the accuracy of the reference standard used. The reference standard must have a known uncertainty that is significantly smaller than the MPE of the gauge being tested. The general calibration principle, often called the 4:1 test accuracy ratio (TAR), requires that the reference standard's uncertainty be no more than one quarter of the gauge's MPE. For a Class 1.0 gauge (MPE ±1.0% FS), the reference standard must have an uncertainty of ±0.25% FS or better, expressed against the same full-scale value.
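A reference standard rarely shares the same range as the gauge under test, so the ratio is easiest to check in pressure units. The sketch below assumes the reference's uncertainty is quoted as a percentage of its own full scale (some instruments quote percent of reading instead); the function names are hypothetical.

```python
def accuracy_ratio(gauge_class_pct, gauge_span, ref_uncertainty_pct, ref_span):
    """Test accuracy ratio: gauge MPE divided by reference uncertainty.

    Both quantities are converted to pressure units first, so the gauge
    and the reference may have different ranges.
    """
    gauge_mpe = gauge_class_pct * gauge_span / 100.0
    ref_uncertainty = ref_uncertainty_pct * ref_span / 100.0
    return gauge_mpe / ref_uncertainty


def reference_is_adequate(ratio, minimum_ratio=4.0):
    """Apply the 4:1 rule of thumb described in the text."""
    return ratio >= minimum_ratio


# Class 1.0 gauge, 0-100 bar, against a 0-400 bar reference rated 0.05% FS:
# gauge MPE is 1.0 bar, reference uncertainty is 0.2 bar.
ratio = accuracy_ratio(1.0, 100.0, 0.05, 400.0)
print(round(ratio, 2), reference_is_adequate(ratio))
```

The same reference rated at 0.1% of its 400 bar full scale would give a ratio of 2.5 and fall short of 4:1, even though 0.1% looks comfortably better than 1.0% on paper.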

Common reference standards for pressure gauge calibration include deadweight testers (which generate pressure by loading calibrated weights onto a piston of known area — the most accurate and widely used primary standard for gauge calibration), precision digital pressure gauges with NIST-traceable calibration, and pressure calibrators with built-in sensors. All reference standards used in inspection should have current calibration certificates traceable to national measurement standards. (NIST — Pressure Calibration Services)

Test Point Spacing and the Importance of the Mid-Range Points

The selection of calibration test points matters significantly for characterizing a gauge's accuracy profile. A test that only checks 0%, 50%, and 100% of full scale may miss a mid-range deviation that would be revealed by testing at 20%, 40%, 60%, and 80%. EN 837-1 specifies test points at 0, 25, 50, 75, and 100% of full scale (minimum), while ASME B40.100 recommends testing at 0, 10, 20, 30, 40, 50, 60, 70, 80, 90, and 100% of full scale for a full calibration. For acceptance inspection of production gauges, a minimum of 5 to 7 test points is generally appropriate — more than 3 points (zero, mid, full scale) but fewer than the 11-point full calibration in most practical inspection contexts.
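Evenly spaced test points, and the up-then-down order in which they are applied, can be generated with a short helper. This is a minimal sketch with hypothetical function names; the number of points to use is the inspector's choice as discussed above.

```python
def calibration_points(full_scale, n_points, range_min=0.0):
    """Return n_points evenly spaced pressures from range_min to full_scale."""
    if n_points < 2:
        raise ValueError("need at least two points (zero and full scale)")
    step = (full_scale - range_min) / (n_points - 1)
    return [range_min + i * step for i in range(n_points)]


def up_down_sequence(points):
    """Ascending sweep followed by descending sweep.

    Full scale is listed once per direction so that every test point
    has both an upscale and a downscale entry.
    """
    return list(points) + list(reversed(points))


points = calibration_points(100.0, 5)
print(points)  # [0.0, 25.0, 50.0, 75.0, 100.0]
print(up_down_sequence(points))
```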

Zero Error and Its Implications

Zero error is the gauge's reading at zero applied pressure (atmospheric pressure for gauge-type instruments). It is sometimes adjustable via the gauge's zero adjustment screw, and it is occasionally adjusted to pass an inspection rather than being reported as an inherent error. A proper calibration procedure records the initial (as-found) zero reading before any adjustment, performs the calibration sweep, and notes any zero adjustment made. A gauge that passes calibration only after zero adjustment had a systematic error that was corrected. This is acceptable within limits, but it should be documented so that the adjusted zero position is known and can be re-verified if the gauge is removed and later returned to service.

Test 2: Hysteresis Testing

What Hysteresis Is and Why It Matters

Hysteresis is the difference between a gauge's reading when a specific pressure is approached from below (ascending pressure) versus when the same pressure is approached from above (descending pressure). A gauge with 1% hysteresis at mid-scale reads 1% of full scale differently depending on whether the process pressure is rising or falling — even though the true pressure is identical in both cases.

Hysteresis in a pressure gauge arises from several mechanical sources. In Bourdon tube gauges — the most common type for industrial applications — hysteresis is caused by internal stresses in the Bourdon tube that resist deformation slightly differently depending on direction. Manufacturing defects such as non-uniform tube wall thickness, residual stresses from forming, or inadequate annealing after manufacture increase hysteresis. In diaphragm gauges, hysteresis is caused by the elastic properties of the diaphragm material and its deformation history.

Hysteresis matters most in applications where the monitored pressure cycles regularly above and below a critical setpoint — a hydraulic press cycle, a pressure vessel fill-and-drain cycle, a pneumatic actuation circuit. In these applications, the gauge will read systematically different values depending on the direction of the most recent pressure change, introducing an error that changes sign depending on the process state.

Calculating Hysteresis Error

Hysteresis error is calculated at each test point as the magnitude of the difference between the upscale reading and the downscale reading at the same nominal pressure, expressed as a percentage of full scale. The maximum value across all test points is the gauge's hysteresis error, which is compared against the permissible hysteresis limit for the applicable standard and accuracy class.
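The hysteresis calculation can be sketched in a few lines. The function name and the readings are hypothetical; the downscale list is assumed to be reordered so that each entry lines up with the upscale reading at the same nominal pressure.

```python
def hysteresis_pct_fs(upscale, downscale, full_scale):
    """Magnitude of the upscale/downscale difference at each point, in % of full scale."""
    return [abs(u - d) / full_scale * 100.0 for u, d in zip(upscale, downscale)]


# Hypothetical 0-100 bar gauge at nominal 0, 25, 50, 75 and 100 bar.
upscale = [0.0, 24.8, 49.9, 75.0, 100.0]
downscale = [0.2, 25.4, 50.6, 75.4, 100.0]

per_point = hysteresis_pct_fs(upscale, downscale, full_scale=100.0)
worst = max(per_point)
print(round(worst, 2), per_point.index(worst))  # 0.7 2
```

In this invented data set every upscale reading is within 0.2 bar of nominal, yet the gauge shows 0.7% of full scale hysteresis at mid-scale, which is the kind of defect an upscale-only check would miss.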

Under EN 837-1, hysteresis is defined as the maximum difference between the ascending and descending readings at any single test point, and is included in the overall MPE budget along with other error components. Under ASME B40.100, hysteresis is evaluated as a separate error component from span error, with its own permissible limit depending on accuracy grade. For most industrial Class 1.0 and Class 1.6 gauges, maximum permissible hysteresis is approximately half to two-thirds of the total span MPE.

Figure 1. Pressure gauge calibration protocol: ascending and descending test points at equal pressure intervals. Span error uses ascending readings; hysteresis is the difference between ascending and descending readings at each point.

Full Calibration Test Protocol Summary

| Test Parameter | Specification | Applicable Standard | Pass/Fail Criterion |
|---|---|---|---|
| Span Error (Full-Scale Error) | Max deviation at any test point vs. reference | ASME B40.100 / EN 837-1 | ≤ MPE for accuracy class (e.g., ±1.0% FS for Class 1.0) |
| Hysteresis | Max (ascending − descending) at any test point | ASME B40.100 / EN 837-1 | Typically ≤ 0.5× MPE of accuracy class |
| Zero Error | Reading at zero applied pressure | ASME B40.100 / EN 837-1 | ≤ MPE; adjustable within gauge specification |
| Return to Zero | Reading after removing full-scale pressure | EN 837-1 | ≤ MPE from initial zero |
| Repeatability | Consistency across repeated test cycles | ASME B40.100 | ≤ 0.5× MPE between cycles |
| Overpressure Test | Apply 1.25× full scale; verify no damage | EN 837-1 | No damage; accuracy maintained within MPE after return to range |
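The numeric criteria in the table can be applied together as a simple accept/reject check. The sketch below is illustrative only: the class and function names are hypothetical, the 0.5× factors are the typical values quoted in the table rather than limits taken from a specific standard, and the overpressure test is left out because it is a physical check rather than a calculation.

```python
from dataclasses import dataclass


@dataclass
class GaugeResults:
    """Worst-case results for one gauge, all in % of full scale."""
    span_error: float
    hysteresis: float
    zero_error: float
    return_to_zero: float
    repeatability: float


def evaluate(results, mpe, hysteresis_factor=0.5, repeatability_factor=0.5):
    """Return a pass/fail flag for each numeric criterion in the table."""
    limits = {
        "span_error": mpe,
        "hysteresis": hysteresis_factor * mpe,
        "zero_error": mpe,
        "return_to_zero": mpe,
        "repeatability": repeatability_factor * mpe,
    }
    return {name: abs(getattr(results, name)) <= limit
            for name, limit in limits.items()}


# Hypothetical Class 1.0 gauge that passes span error but fails hysteresis.
results = GaugeResults(span_error=0.8, hysteresis=0.7, zero_error=0.2,
                       return_to_zero=0.1, repeatability=0.3)
checks = evaluate(results, mpe=1.0)
print(checks)
print("ACCEPT" if all(checks.values()) else "REJECT")  # REJECT
```

Keeping the factors as parameters makes it straightforward to substitute the limits from whichever standard and accuracy class the purchase order specifies.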

Additional Inspection Checks Beyond Calibration

Visual and Mechanical Inspection

Calibration testing verifies accuracy but does not cover all failure modes that affect gauge reliability in service. A complete incoming inspection of pressure gauges should also include visual examination for case damage, lens cracks, or dial face defects; verification of the pressure connection thread specification and dimensional compliance (NPT, BSP, metric); checking the pointer attachment and zero adjuster function; and verification that the nameplate markings match the purchase order requirements — range, accuracy class, process connection type, material specifications, and relevant approvals (CE, ATEX, SIL, etc.).

For gauges intended for use in explosive atmospheres (ATEX Zone 1 or Zone 2), the inspection should verify that the ATEX certification marking on the gauge matches the purchase specification — not merely that a certification label is present. ATEX-labeled gauges from Chinese manufacturers have been found in the market with certification marks that do not correspond to valid certificates, a finding that a calibration test alone would not reveal. (ATEX Directive — European Commission)

Pressure Connection and Seal Integrity

Calibration testing connects the gauge's pressure port to the reference source, so it should reveal leakage at the pressure connection. Any gauge that leaks at the connection during calibration is an immediate reject. However, for gauges that will be used at elevated temperatures, in pulsating service, or with aggressive media, the seal material specification (Buna-N, PTFE, Viton) should be verified against the purchase requirement in addition to pressure-testing the connection.

Sampling Plans for Pressure Gauge Incoming Inspection

When to Use AQL Sampling vs. 100% Inspection

Pressure gauge incoming inspection is commonly conducted using AQL (Acceptable Quality Level) sampling — the same statistical sampling framework used for product quality inspection in consumer goods. The choice between AQL sampling and 100% inspection depends on the criticality of the application and the purchase quantity.

For safety-critical applications (gauges on pressure vessels, in safety instrumented systems, or on relief valve back-pressure circuits), 100% calibration is standard practice — sampling is not appropriate when a single non-conforming gauge in service could cause injury or equipment damage. For industrial process monitoring applications where the consequence of a reading error is operational rather than safety-related, AQL sampling at an AQL of 1.0 or 2.5 is a practical approach for large purchase quantities.

TradeAider's pre-shipment inspection services use AQL-based sampling per ANSI/ASQ Z1.4, which is directly applicable to gauge lot inspection. For safety-critical gauge applications requiring 100% calibration, the inspection can be coordinated with an accredited calibration laboratory. Use the AQL Calculator to determine the sample size for your gauge purchase quantity.
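For planning purposes, the sample-size lookup can be sketched as below. The lot-size bands and sample sizes follow the General Inspection Level II code letters of ANSI/ASQ Z1.4 and should be checked against the standard itself before use. The sketch deliberately omits acceptance and rejection numbers, and it does not model the cases where the standard's master table directs a different sample size for a particular AQL. The function name and the `safety_critical` flag are hypothetical.

```python
import bisect

# Upper lot-size bound for each code letter, General Inspection Level II.
_LOT_UPPER_BOUNDS = [8, 15, 25, 50, 90, 150, 280, 500, 1200, 3200,
                     10000, 35000, 150000, 500000]
_CODE_LETTERS = "ABCDEFGHJKLMNPQ"
_SAMPLE_SIZES = {"A": 2, "B": 3, "C": 5, "D": 8, "E": 13, "F": 20, "G": 32,
                 "H": 50, "J": 80, "K": 125, "L": 200, "M": 315, "N": 500,
                 "P": 800, "Q": 1250}


def gauges_to_calibrate(lot_size, safety_critical=False):
    """Number of gauges to pull from a lot for calibration.

    Safety-critical lots get 100% inspection; other lots use the
    Level II sample size for their lot-size band.
    """
    if lot_size < 1:
        raise ValueError("lot_size must be at least 1")
    if safety_critical or lot_size < 2:
        return lot_size
    letter = _CODE_LETTERS[bisect.bisect_left(_LOT_UPPER_BOUNDS, lot_size)]
    return min(_SAMPLE_SIZES[letter], lot_size)


print(gauges_to_calibrate(1000))                        # 80
print(gauges_to_calibrate(1000, safety_critical=True))  # 1000
```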

Common Findings in Pressure Gauge Incoming Inspection from China

The Most Frequent Non-Conformances

Quality control professionals conducting incoming inspection of pressure gauges sourced from Chinese manufacturers consistently find the same categories of non-conformance. Hysteresis failures are the most common accuracy-related finding — gauges that pass the upscale calibration sweep but show excessive deviation on the downscale return, revealing Bourdon tube mechanical problems that were not detected in the manufacturer's outgoing test (or that were detected but not reported). Accuracy class misrepresentation — gauges labeled Class 1.0 that test at Class 2.5 performance — is also a frequent finding in the mid-range gauge segment where cost pressure is highest.

Documentation non-conformances are common: missing or incomplete calibration certificates, certificates that reference test standards not appropriate for the gauge type, or certificates that record only a single test point rather than the multi-point test required by the applicable standard. A calibration certificate that records only the gauge reading at 50% of full scale is not a valid Class 1.0 calibration certificate — it is a data sheet entry.

Frequently Asked Questions

What is the difference between gauge pressure and absolute pressure gauges for calibration purposes?

Gauge pressure instruments measure pressure relative to atmospheric pressure: a zero reading corresponds to local atmospheric pressure, not to zero absolute pressure. Absolute pressure instruments measure pressure relative to absolute zero (perfect vacuum). Calibration of gauge pressure instruments uses a reference pressure source that applies gauge pressure (above atmospheric), and the test is performed under the same ambient atmospheric conditions in which the gauge will be used. Calibration of absolute pressure gauges requires a reference standard that can measure absolute pressure and typically requires an evacuated reference port. The calibration test protocol is different for each type, and the two types are not interchangeable in application or in calibration setup.

How often should pressure gauges be re-calibrated in service?

Re-calibration interval depends on the application, the required accuracy class, and the gauge's calibration history. For non-critical process gauges in stable service conditions, an annual re-calibration is typical. For gauges used in safety-critical applications or subject to pulsating pressure, high temperature cycling, or aggressive media, a 6-month interval is more common. The optimal interval is established based on calibration history — if a gauge consistently passes re-calibration with minimal drift, the interval can be extended; if it consistently drifts toward the MPE limit, the interval should be shortened or the root cause of the drift investigated.

Can I calibrate pressure gauges on-site, or do they need to go to a laboratory?

Pressure gauges can be calibrated on-site using portable calibration equipment — portable deadweight testers, pressure calibrators, or pressure gauge comparators — provided the reference standard has a current calibration certificate and the on-site environment is controlled adequately (primarily temperature). Laboratory calibration offers better environmental control and higher reference standard accuracy but requires removing the gauges from service. For large gauge populations, on-site calibration using a portable comparator checked against a laboratory-calibrated transfer standard is a common and practical approach.

What should a valid pressure gauge calibration certificate contain?

A valid calibration certificate should identify the gauge by serial number and range, state the reference standard used and its calibration traceability, record the measured value and reference value at each test point in both ascending and descending directions, state the calculated error and MPE at each point, record the ambient conditions during the test (temperature, humidity), state whether the gauge passed or failed, and be signed by the technician who performed the calibration with the date. Certificates that report only a "pass" result without the supporting test data are not valid calibration records and should be rejected.
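When certificates arrive in bulk, the checklist above can be turned into a simple completeness check on transcribed certificate data. The dictionary layout and every field name below are hypothetical, chosen only to mirror the items listed in this answer.

```python
REQUIRED_FIELDS = (
    "serial_number", "range", "reference_standard", "traceability",
    "ambient_temperature", "ambient_humidity", "result", "technician", "date",
)
REQUIRED_POINT_KEYS = ("reference", "ascending", "descending", "error", "mpe")


def certificate_problems(cert):
    """Return a list of problems found; an empty list means nothing is missing."""
    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS
                if cert.get(name) in (None, "")]
    points = cert.get("test_points") or []
    if len(points) < 2:
        problems.append("test data missing or single-point only")
    for i, point in enumerate(points):
        for key in REQUIRED_POINT_KEYS:
            if key not in point:
                problems.append(f"test point {i}: missing {key}")
    return problems


# A certificate that reports only a result, with no supporting data.
print(certificate_problems({"serial_number": "SN-001", "result": "pass"}))
```

A check like this only confirms that the required entries are present. It does not confirm that the recorded values are plausible or that the stated traceability is genuine.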

Conclusion

Full-scale error calibration and hysteresis testing together provide a complete characterization of a pressure gauge's accuracy — span calibration verifies that the gauge reads correctly across its range, and hysteresis testing reveals the mechanical problems that calibration alone cannot see. For pressure gauges sourced from Chinese manufacturers, incoming inspection that includes both tests is the practical difference between accepting gauges that meet your accuracy class requirement and accepting gauges that look right but read wrong.

Whether you need AQL-based sampling inspection for large batches or 100% verification for safety-critical applications, TradeAider's inspection team can coordinate on-site gauge inspection with real-time reporting. Contact TradeAider to discuss your instrumentation inspection requirements, or use the Inspection Charge Calculator to estimate inspection costs for your next shipment.
